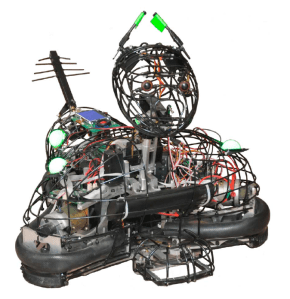

Photo of MogiRobi used with permission of Gabriella Lakatos.

In fiction and film, robots speak like we do. Sure, the vocabulary of Star Wars’ R2-D2 was limited to spunky squeaks. But C-3PO comported himself with the decorum of a British butler, and had the service-class accent down pat. As voiced by Joan Rivers, Spaceballs’ Dot Matrix tended to her Druish Princess while prattling in Brooklynese.

Apple’s Siri, Microsoft’s Cortana, and Amazon’s Alexa seem “social,” and they all have voices that communicate a steady stream of dignity and patience. What they don’t have is a grasp of the nuance of language. (How many times has my iPhone said to me, “I don’t understand the question”?) And they also don’t have personality or any way to communicate emotional states like empathy, fear, and affection.

Humans communicate emotions with an unfathomably deep vocabulary of words. They also use a language of facial expressions and physical gestures that is itself almost impossibly multi-tonal, and that remains for the time being beyond the ability of any programmer to encode in a socially active service robot. Even our physical semantics are beyond reduction; they’re personality-driven, culture-bound, and sometimes so irony-laden that distilling them into a code for embedding into robots would inevitably produce errors, ones that might make those robots seem creepy or, worse, menacing.

And so it’s perhaps not surprising that a team of scientists from universities in Budapest and a research group in London has searched for a simpler model than “human” in constructing a social robot capable of communicating.

Given that robots may one day be expected to serve as nannies, housekeepers, and even best friends, the researchers imagined that there might be a use for robots that present as—well, as man’s best friend, at least behaviorally. Certainly from a programming point of view, the broad strokes of dog behavior are familiar to many humans. Furthermore, the behavioral language of dogs may be more easily deconstructed (and re-constructed for use in a robot) than that of humans.

Publishing in the scholarly journal PLOS One, the scientists reported on two experiments testing whether humans are able to understand the physical language of robots that behave like dogs.

For the experiments, researchers in the Department of Mechatronics, Optics, and Information Engineering of Budapest’s University of Technology and Economics built MogiRobi, a remote-controlled ersatz robot for which researchers at the Comparative Ethology Research Group of Budapest’s Eötvös Loránd University specified a set of common, emotionally expressive dog behaviors. (Unknown to the study’s test subjects, MogiRobi was controlled remotely by a hidden experimenter.)

One at a time, 48 test subjects entered a room in which MogiRobi was stationed at the wall opposite the door. Two colored tennis balls had been placed in a bag that was fixed to the handle of the door. When a test subject entered the room, MogiRobi stood attentively with its “ears” pointed upward in a standard greeting stance. When the test subject called the dog’s name, MogiRobi turned its head toward the subject and wagged its antenna (tail). When the test subject commanded MogiRobi to come, MogiRobi approached the subject with its tail wagging, and then it lowered its tail and ears tentatively.

The test subject was directed to use the two balls to play with MogiRobi. Unknown to the test subject, the robot “preferred” one of the balls. When playing with its preferred ball, MogiRobi displayed the “happy dog” behavior of tail and ears pointing up, and tail wagging. When playing with the other ball, MogiRobi stopped wagging its tail, moved closer to the ball, and then “fearfully” lowered its tail and ears. After that initial inspection, it kept as far away as it could from the ball. If the test subject threw the ball, MogiRobi moved to the other side of the room.

Afterwards, when asked open-ended questions like, “Why did you play more with that ball?” almost 96% of subjects said that MogiRobi had preferred one ball over the other. And most test subjects said that MogiRobi had successfully conveyed emotions like happiness and fear.

In a second experiment, MogiRobi’s hidden human controller had the robot behave in the way many dogs do after they’ve broken a rule. While dogs’ “guilty looks” may have nothing to do with guilt and everything to do with fear of punishment, the Budapest researchers wanted to know if MogiRobi could look guilty enough for test subjects to assume that a rule had been broken.

One by one, test subjects entered the experiment room and called MogiRobi to come. MogiRobi responded with the same behaviors as in the first experiment. Then the test subjects were asked to teach MogiRobi how not to knock over a bottle that was placed behind a barrier. MogiRobi showed each test subject that it had learned the trick. When the test subject left and then re-entered the room, he or she could not see behind the barrier to determine whether the bottle was still standing. For half of them, MogiRobi’s greeting behaviors included guilty looks. For the other half, they did not. Twenty-one of the 22 test subjects who were greeted with guilty behaviors guessed correctly that the bottle had been knocked over. Only half of the subjects who were greeted with normal greeting behavior guessed correctly.

The impressive difference between the two groups in the number of correct guesses led the researchers to conclude that people can accurately attribute emotions to a robot behaving like a dog, and that they take into account the robot’s apparent emotions when interacting with it. Furthermore, the researchers reported that humans interacted with the emotionally expressive robot as though it had real emotions. In both experiments, the test subjects talked to MogiRobi. They petted it. They praised it.

I like dogs. Many people do. It could be that people like me would also like robots that behave like dogs. As lead researcher Gabriella Lakatos pointed out in an overseas Skype interview, “Social robots that behave like dogs wouldn’t necessarily need to look like dogs. Ideally their form would be determined by their function. But regardless of their shape, if the robots behaved like dogs, humans might understand them.” Lakatos also explained that, if dog-like behaviors communicate intention and emotion well enough, for some social robots the expense and complexity of verbal language might be dispensed with entirely.

Hold on there. At least Siri and Cortana need to speak. Because, really. Who wants their phone wiggling with glee and rushing after colored balls? But I can see an advantage to me, a potential user of socially competent and fully mobile robots, in relying on nonverbal “interspecies” communication. For starters, if a robot behaving like a dog gets a gesture wrong, it might seem funny or cute. But it would be downright creepy to have a highly verbal, humanoid robot smile broadly while delivering to me the message that, oh, by the way, Ma’am, the apocalypse has arrived.

I’m always trying to think ahead . . . .

_____

Gabriella Lakatos, Márta Gácsi, Veronika Konok, Ildikó Brúder, Boróka Bereczky, Péter Korondi, Ádám Miklósi, “Emotion Attribution to a Nonhumanoid Robot in Different Social Situations,” PLOS One, December 2014.

Photo of real dog used under the Creative Commons license by generous permission of Beverly and Pack on Flickr.